When talking about accessibility, the question of how blind people interact with computers often comes up. This article explains the basics and uses OS X as an example so that you can test your own application. Many tools help people with low vision, magnifiers for example, and they work best when applications provide sufficient contrast and use clear fonts. But magnification only gets you so far, and at some point it is no longer enough to use a computer effectively. This is where screen readers come into the picture.

A screen reader is an application that presents the screen contents to the user in a non-visual way, either as speech output or on a braille display. For testing, the speech output is usually sufficient: if speech works, braille will usually work too. OS X ships with VoiceOver, which makes testing applications easy. For a full description of VoiceOver, head over to its documentation; here we only mention the most essential keyboard shortcuts. There is also a VoiceOver tutorial that is a fun way to get started. To test a Qt application, run it and turn on VoiceOver (Command-F5). Qt's accessibility support on OS X has improved a lot recently, so make sure to use at least Qt 5.3.

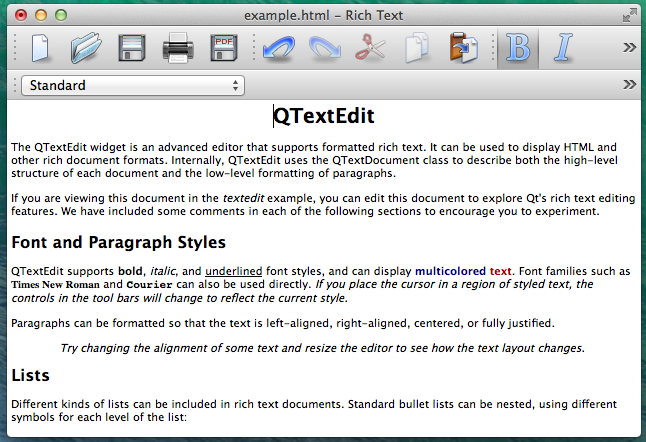

As the example application for this post we use the text editor example that ships with Qt (examples/widgets/richtext/textedit). Here is a screenshot of its window:

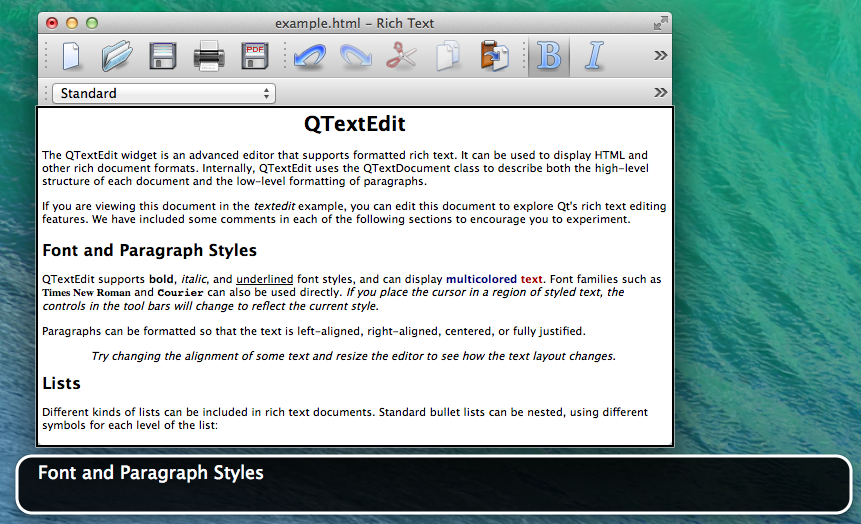

When you move the text cursor up and down, VoiceOver reads the current line; when you move it left and right, it reads individual letters. For the screenshots, an option that displays what VoiceOver says on screen was enabled; this is also helpful while testing.

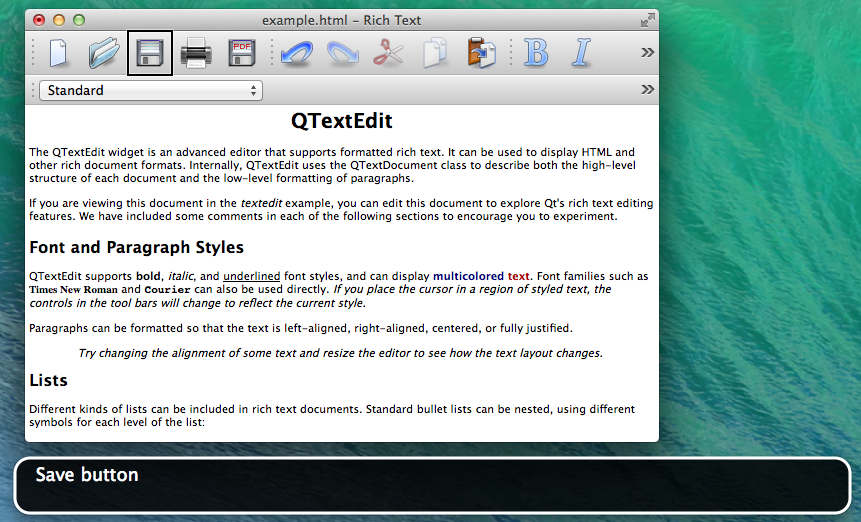

To move around and explore the window, VoiceOver provides shortcuts that control a virtual focus, the VoiceOver cursor. It is drawn as a black rectangle to help sighted people understand what is going on. Pressing Control-Option-Left or Control-Option-Right (Option is the Alt key) moves the VoiceOver cursor from one widget to the next. This is a good way to check that all UI elements show up and have sensible labels. Take the tool bar, for example: its buttons show icons only, yet they should still convey their meaning when read by the screen reader. The save button, for instance, should be announced with a name that says what it does, not just as an unnamed button.

Unlabeled widgets are usually easy to fix: call QWidget::setAccessibleName() with a suitable string, or type the text into the accessibleName property in Qt Designer. The name should be a short title for the widget (such as "Save"); it conveys the same information that the widget's icon gives. Screen readers already know that the widget is a button and announce that themselves. When an additional hint is needed, set accessibleDescription in the same way as the name; screen readers use it as an extra hint that is usually read after announcing the element (such as "Saves the current document").

There are still many things to consider, but following best practices and conventions when designing applications often gets most of the work done. Manual testing should follow, and finally user feedback needs to be taken into account. For complex custom QWidgets it is necessary to write subclasses of QAccessibleInterface, which is material for another post. For Qt Quick there is the Accessible attached property. Its documentation will be improved in Qt 5.4, hence the link to a documentation snapshot. As with QWidget, the Qt Quick Controls come with sensible preset properties, so most things just work. Sometimes it is still necessary to tweak a few things or to add accessibility to custom controls.

Testing on other platforms and details such as writing custom QAccessibleInterface implementations will be covered in later posts.