Qt Multimedia in Qt 6

The first beta of Qt 6.2 has just been released and, among several other new add-ons, it features a brand-new Qt Multimedia module.

Qt Multimedia has undergone some rather large changes for Qt 6. In many ways, it’s a new API and implementation, even though we reused some of the code from Qt 5.15.

For most of our modules, we have tried to keep Qt 6 as source compatible with Qt 5 as possible. Qt Multimedia, however, needed large changes to make its API and implementation fit for the future, and in the end we decided to aim for the best possible API rather than maximum compatibility. If you have been using Qt Multimedia in Qt 5, you will need to adapt your code.

This blog post walks you through the biggest changes, looking at both the API and the internals.

Goals

Qt Multimedia in Qt 5 had a rather loosely defined scope. Support for different parts of the API wasn't consistent across different backends, and parts of the API itself were not easy to use in a cross-platform way.

For Qt 6, we tried to narrow down the scope to some extent and are working on a consistent set of features that works across all supported platforms. We are not quite there yet with that goal, but hope to have filled most implementation gaps by the release of Qt 6.2.0.

The main use cases we wanted to support in Qt 6.2 are:

- Audio and video playback

- Audio and video recording (from Camera and Microphone)

- Low level (PCM based) audio and audio decoding

- Integration with Qt Quick and Widgets

- Hardware acceleration wherever possible

From what we can tell those features cover a large part of the use cases where our users have been using Qt Multimedia in the past. Our goal is to focus on those central use cases first and make sure they work consistently across all our platforms before extending the module with new features.

Internal architectural changes

Qt Multimedia in Qt 5 had a complex plugin-based architecture, using multiple plugins for different frontend functionality. A complete multimedia backend implementation consisted of no fewer than four plugins. The backend API used to implement those plugins was public, which made it difficult to adjust and improve the functionality of those backends.

That architecture made it very difficult to maintain and develop the module. With Qt 6, we have chosen to simplify this significantly and have removed the plugin infrastructure. The backend is now chosen at compile time and compiled into the Qt Multimedia shared library. There is now only one backend API covering all of multimedia, removing the artificial split into multiple backends we had in Qt 5. Finally, we chose to make the backend API private, so that we can easily adjust and extend it in the future.
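Here is a minimal plain C++ sketch of what compile-time selection means in practice. The platform checks run in the preprocessor when the library is built, so the binary contains exactly one backend and nothing is looked up at run time. The `MEDIA_BACKEND_NAME` macro and the `compiledBackend()` function are hypothetical. They are not part of Qt's build system.

```cpp
#include <string_view>

// Hypothetical sketch (not Qt's build logic): the backend is fixed when the
// library is compiled, following the Qt 6 list of supported backends.
// Android must be tested before Linux, because Android toolchains also
// define __linux__.
#if defined(__ANDROID__)
#  define MEDIA_BACKEND_NAME "Android MediaPlayer/Camera"
#elif defined(__APPLE__)
#  define MEDIA_BACKEND_NAME "AVFoundation"
#elif defined(_WIN32)
#  define MEDIA_BACKEND_NAME "WMF"
#elif defined(__linux__)
#  define MEDIA_BACKEND_NAME "GStreamer"
#else
#  define MEDIA_BACKEND_NAME "none"
#endif

// Returns the backend baked into this build; no plugin is loaded at run time.
constexpr std::string_view compiledBackend() { return MEDIA_BACKEND_NAME; }
```

Because the choice is a compile-time constant, the platform-independent code can depend on one backend API without any plugin loading or version negotiation.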

Once that was done, we could take a close look at the APIs and interfaces we needed towards the platform-dependent backend code. We managed to reduce the number of classes needed to implement a multimedia backend from 40 to 15. We also reduced the number of pure virtual methods by providing fallback implementations for many non-essential features.

The new backend API was modeled to some extent on the QPA architecture we’re using for window system integration in Qt Gui. The new QPlatformMediaIntegration class now serves as a common entry point and as a factory for instantiating platform-dependent backend objects. In most cases, we aimed for a one-to-one relationship between the classes in the public API and the classes implementing that functionality. For example, the public QMediaPlayer class has a corresponding QPlatformMediaPlayer class that implements the platform-dependent functionality.

These changes also let us remove a lot of code that was duplicated between frontend and backend, and avoid large amounts of call forwarding between them. We could also move much of the cross-platform functionality and validation into the shared, platform-independent part of the code.
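The structure described in the last three paragraphs can be sketched in plain C++. All class names here are hypothetical stand-ins and are not Qt's private QPlatform* classes. The sketch shows five things:

- Essential backend methods are pure virtual.
- A non-essential method has a fallback.
- A single integration object acts as the factory.
- Each public frontend object owns exactly one backend object.
- Input validation happens once, in the shared frontend code.

```cpp
#include <algorithm>
#include <cstdint>
#include <memory>

// Hypothetical backend interface: essentials are pure virtual,
// non-essential features get a fallback so backends need not implement them.
class PlatformMediaPlayer {
public:
    virtual ~PlatformMediaPlayer() = default;
    virtual void play() = 0;
    virtual void setPosition(std::int64_t ms) = 0;
    virtual std::int64_t duration() const = 0;
    virtual bool setPlaybackRate(double) { return false; } // fallback: unsupported
};

// Hypothetical single entry point and factory, in the spirit of QPlatformMediaIntegration.
class PlatformMediaIntegration {
public:
    virtual ~PlatformMediaIntegration() = default;
    virtual std::unique_ptr<PlatformMediaPlayer> createPlayer() = 0;
};

// Hypothetical public frontend: owns exactly one backend object (1:1) and does
// the cross-platform validation, so backends never see out-of-range input.
class MediaPlayer {
public:
    explicit MediaPlayer(PlatformMediaIntegration &integration)
        : m_backend(integration.createPlayer()) {}

    void play() { m_backend->play(); }

    // Assumes duration() >= 0; positions are clamped to [0, duration].
    void setPosition(std::int64_t ms)
    {
        m_backend->setPosition(std::clamp<std::int64_t>(ms, 0, m_backend->duration()));
    }

    bool setPlaybackRate(double rate) { return rate > 0 && m_backend->setPlaybackRate(rate); }

private:
    std::unique_ptr<PlatformMediaPlayer> m_backend;
};

// A fake backend that records what it was asked to do.
class FakePlayer : public PlatformMediaPlayer {
public:
    void play() override { playing = true; }
    void setPosition(std::int64_t ms) override { position = ms; }
    std::int64_t duration() const override { return 10'000; }
    bool playing = false;
    std::int64_t position = -1;
};

class FakeIntegration : public PlatformMediaIntegration {
public:
    std::unique_ptr<PlatformMediaPlayer> createPlayer() override
    {
        auto player = std::make_unique<FakePlayer>();
        lastPlayer = player.get();
        return player;
    }
    FakePlayer *lastPlayer = nullptr;
};
```

A side benefit of this layout is that the platform-independent frontend can be exercised against a fake integration without any real media backend.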

Altogether, this greatly simplified our code base and led to a large reduction in code size without losing much functionality. Qt Multimedia in Qt 5.15 was around 140,000 lines of code; in Qt 6, we are currently down to around 74,000.

Supported backends

With Qt 6, we have also revisited the supported backends and reduced them to a set we believe we can support going forward. In Qt 5, for example, we had three completely different backend implementations on Windows: one using DirectShow, one using WMF, and a separate WMF-based implementation for WinRT.

In Qt 6, the currently supported set is:

- Linux, using GStreamer

- macOS and iOS, using AVFoundation

- Windows, using WMF

- Android, using the MediaPlayer and Camera Java APIs

Support for QNX is planned for Qt 6.3. We might also have low-level audio working on WebAssembly in time for 6.2. In addition, we still have code for low-level audio support on Linux using PulseAudio or ALSA, but it is currently neither tested nor supported. Depending on demand, we might bring it back in a later release.

Public APIs

The public API of Qt Multimedia consists of 5 large functionality blocks. Three of those blocks already existed in Qt 5, but the APIs inside those blocks have undergone significant changes. The functionality blocks are:

- Device discovery

- Low level audio

- Playback and decoding

- Capture and recording

- Video output pipeline

When designing the new API, we also aimed for a unified API between C++ and QML. This allowed us to remove a lot of code that simply wrapped the C++ API and exposed it to QML in a slightly different way. Most public C++ classes now have a corresponding QML type with the same name, minus the Q prefix. For example, QMediaPlayer has a corresponding MediaPlayer QML type with the same API as the C++ class.

Let’s look at each of these functionality blocks in more detail.

Device discovery

Let’s start with device discovery. The new QMediaDevices class is all about giving you information about available audio and video devices. It will allow you to list the available audio inputs (usually microphones), audio outputs (speakers and headsets) and cameras. You can retrieve the default devices and the class also notifies you about any changes to the configuration, e.g., when the user connects an external headset.

#include <QDebug>

#include <QMediaDevices>

QMediaDevices devices;

QObject::connect(&devices, &QMediaDevices::audioInputsChanged,

[]() { qDebug() << "available audio inputs have changed"; });

Low level audio

This block of functionality covers low-level audio: working with raw PCM data and reading it directly from, or writing it directly to, an audio device.

Architecturally, this block is still rather similar to what we had in Qt 5, but many details have changed. Most notably, the low-level classes for reading from or writing to an audio device have been renamed to QAudioSource and QAudioSink. The new names reflect their low-level nature and free up the old Qt 5 names (QAudioInput and QAudioOutput) for use in the playback and capture APIs.
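Because QAudioSink consumes raw PCM, the application is responsible for producing the samples. The plain C++ sketch below generates a sine tone, assuming a 16-bit signed integer sample format with interleaved channels. The resulting samples are the kind of data you would write to the QIODevice returned by QAudioSink::start(). The function name `makeSineTone` and its parameters are hypothetical.

```cpp
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: interleaved 16-bit signed PCM, i.e. frame 0 holds
// [ch0, ch1, ...], then frame 1, and so on. The same signal goes to every channel.
std::vector<std::int16_t> makeSineTone(int sampleRate, int channelCount,
                                       double frequencyHz, int durationMs,
                                       double amplitude = 0.5) // 0.0 .. 1.0
{
    const double pi = 3.14159265358979323846;
    const std::int64_t frames = std::int64_t(sampleRate) * durationMs / 1000;
    std::vector<std::int16_t> samples;
    samples.reserve(std::size_t(frames) * std::size_t(channelCount));
    for (std::int64_t i = 0; i < frames; ++i) {
        const double t = double(i) / sampleRate; // time of this frame in seconds
        const auto value = static_cast<std::int16_t>(
            std::lround(amplitude * 32767.0 * std::sin(2.0 * pi * frequencyHz * t)));
        for (int ch = 0; ch < channelCount; ++ch)
            samples.push_back(value);
    }
    return samples;
}
```

For a 48 kHz stereo stream, 10 ms of audio is 480 frames, or 960 interleaved samples.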

The QAudioFormat API has been cleaned up and simplified, and now supports the four most commonly used PCM sample formats: 8-bit unsigned integer, 16-bit and 32-bit signed integer, and floating point. QAudioFormat has also gained a new API for channel positioning information, but the backends do not yet fully support it.
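The arithmetic behind these four sample formats is simple, and it is what methods such as QAudioFormat::bytesPerFrame() and QAudioFormat::bytesForDuration() compute for you. As a standalone illustration, the plain C++ model below reproduces that arithmetic and also shows how each format maps to a normalized [-1, 1] range. The enum names follow QAudioFormat::SampleFormat, but this is hypothetical code, not Qt's implementation.

```cpp
#include <cstdint>

// Hypothetical standalone model of the four PCM sample formats (not Qt code).
enum class SampleFormat { UInt8, Int16, Int32, Float };

constexpr int bytesPerSample(SampleFormat f)
{
    switch (f) {
    case SampleFormat::UInt8: return 1;
    case SampleFormat::Int16: return 2;
    case SampleFormat::Int32: return 4;
    case SampleFormat::Float: return 4;
    }
    return 0;
}

// One frame holds one sample for every channel.
constexpr int bytesPerFrame(SampleFormat f, int channels) { return bytesPerSample(f) * channels; }

// Bytes needed for `microseconds` of audio (whole frames only).
constexpr std::int64_t bytesForDuration(SampleFormat f, int channels, int sampleRate,
                                        std::int64_t microseconds)
{
    return bytesPerFrame(f, channels) * (microseconds * sampleRate / 1'000'000);
}

// Maps a raw sample value to [-1.0, 1.0]; unsigned 8-bit data is centered on 128.
constexpr double normalized(SampleFormat f, double raw)
{
    switch (f) {
    case SampleFormat::UInt8: return (raw - 128.0) / 128.0;
    case SampleFormat::Int16: return raw / 32768.0;
    case SampleFormat::Int32: return raw / 2147483648.0;
    case SampleFormat::Float: return raw;
    }
    return 0.0;
}
```

For example, one second of 48 kHz stereo audio in 16-bit format needs 48,000 frames × 4 bytes = 192,000 bytes.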

We have also removed the deprecated QSound class. QSoundEffect is its replacement for playing short sounds with low latency. QSoundEffect currently still requires the effects to be in WAV format, but after 6.2 we are planning to extend the class so that it can also play back compressed audio data.
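Since QSoundEffect expects WAV input, one way to use generated PCM with it is to prepend a standard 44-byte RIFF/WAVE header and point QSoundEffect::setSource() at the resulting file. The plain C++ sketch below builds that header for 16-bit PCM. The function name `wavHeader` is hypothetical, and the layout is the canonical PCM WAV layout rather than anything Qt-specific.

```cpp
#include <cstdint>
#include <vector>

namespace {
// WAV stores multi-byte fields little-endian.
void putU32(std::vector<std::uint8_t> &out, std::uint32_t v)
{
    for (int i = 0; i < 4; ++i)
        out.push_back(std::uint8_t(v >> (8 * i)));
}
void putU16(std::vector<std::uint8_t> &out, std::uint16_t v)
{
    out.push_back(std::uint8_t(v & 0xff));
    out.push_back(std::uint8_t(v >> 8));
}
void putTag(std::vector<std::uint8_t> &out, const char (&tag)[5])
{
    out.insert(out.end(), tag, tag + 4); // four ASCII characters, no terminator
}
} // namespace

// Hypothetical helper: canonical 44-byte header for uncompressed 16-bit PCM.
// Append `dataBytes` bytes of interleaved samples after it.
std::vector<std::uint8_t> wavHeader(std::uint32_t sampleRate, std::uint16_t channels,
                                    std::uint32_t dataBytes)
{
    const std::uint16_t bitsPerSample = 16;
    const auto blockAlign = std::uint16_t(channels * bitsPerSample / 8); // bytes per frame
    std::vector<std::uint8_t> h;
    putTag(h, "RIFF");
    putU32(h, 36 + dataBytes);          // size of everything after this field
    putTag(h, "WAVE");
    putTag(h, "fmt ");
    putU32(h, 16);                      // size of the PCM fmt chunk
    putU16(h, 1);                       // audio format 1 = uncompressed PCM
    putU16(h, channels);
    putU32(h, sampleRate);
    putU32(h, sampleRate * blockAlign); // byte rate
    putU16(h, blockAlign);
    putU16(h, bitsPerSample);
    putTag(h, "data");
    putU32(h, dataBytes);
    return h;
}
```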

Playback

The main class for handling playback of media files is QMediaPlayer, and its API has been simplified compared to Qt 5. For now, we have removed all playlist functionality from the module. It was built into the Qt 5 media player, but it complicated both the API and the implementation. We are planning to bring playlist functionality back after 6.2 as a separate, stand-alone class that you can connect to QMediaPlayer if required. Until then, you can find some code for handling playlists in the “player” example.
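Until a stand-alone playlist class returns, playlist logic can live entirely outside the player. A minimal plain C++ sketch keeps a list of sources and a current index. The `Playlist` class and its `PlaybackMode` values are hypothetical, and this is not the code from the “player” example.

```cpp
#include <cstddef>
#include <optional>
#include <string>
#include <utility>
#include <vector>

// Hypothetical playlist model; it knows nothing about QMediaPlayer.
class Playlist {
public:
    enum class PlaybackMode { Sequential, Loop };

    explicit Playlist(std::vector<std::string> sources,
                      PlaybackMode mode = PlaybackMode::Sequential)
        : m_sources(std::move(sources)), m_mode(mode) {}

    // Source to play now, or nullopt once a sequential playlist has finished.
    std::optional<std::string> current() const
    {
        if (m_index >= m_sources.size())
            return std::nullopt;
        return m_sources[m_index];
    }

    // Advances to the next source (wrapping around in Loop mode) and returns it.
    std::optional<std::string> next()
    {
        if (m_index + 1 < m_sources.size())
            ++m_index;
        else if (m_mode == PlaybackMode::Loop && !m_sources.empty())
            m_index = 0;
        else
            m_index = m_sources.size(); // past the end: nothing left to play
        return current();
    }

private:
    std::vector<std::string> m_sources;
    PlaybackMode m_mode;
    std::size_t m_index = 0;
};
```

With QMediaPlayer, you would react to mediaStatusChanged() reporting QMediaPlayer::EndOfMedia by calling next(). If it returns a value, convert it to a QUrl, pass it to setSource(), and call play() again.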

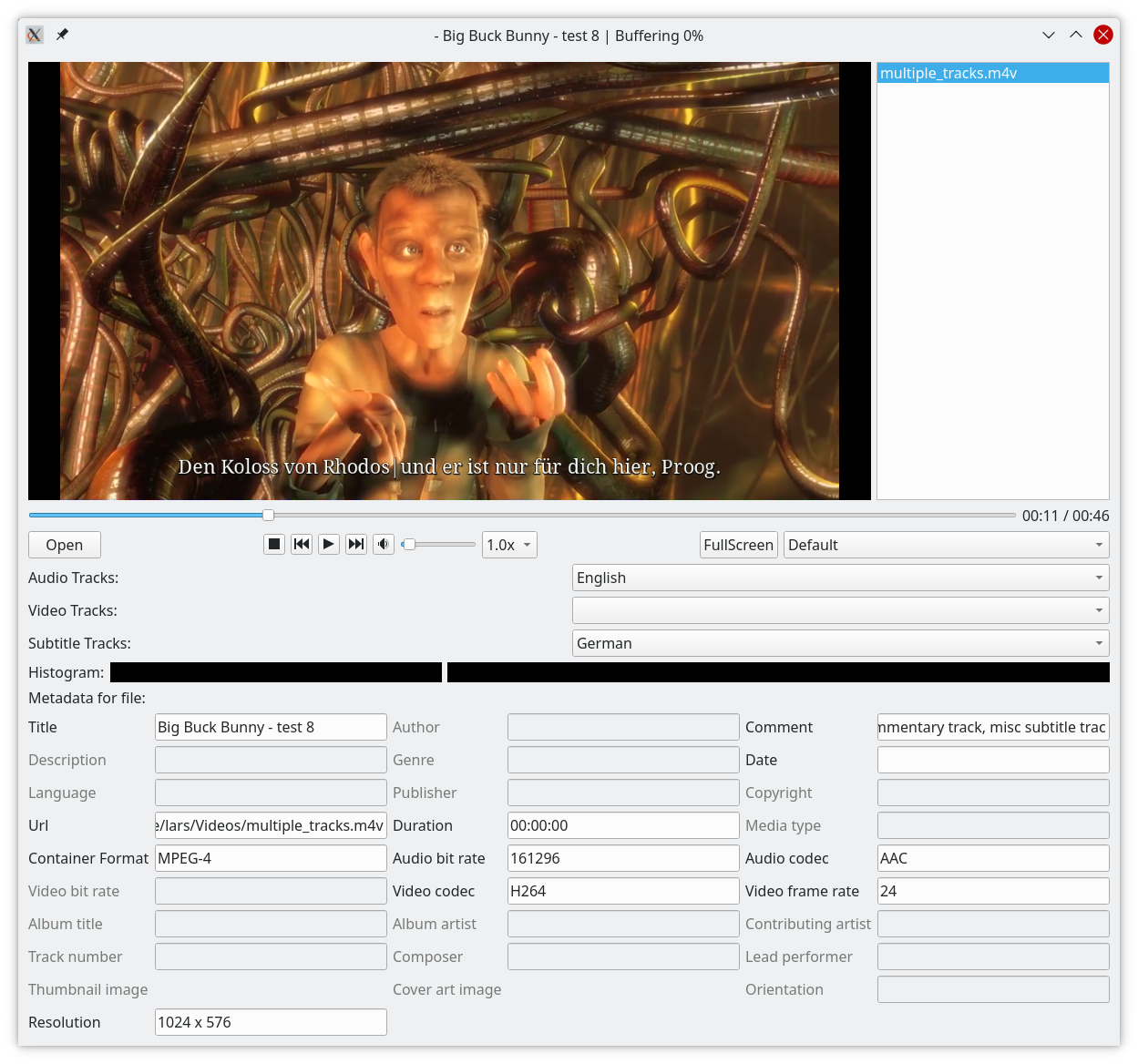

On the other hand, QMediaPlayer has gained the ability to render subtitles. You can now inspect the available audio, video and subtitle tracks and select the one you want using the setActiveAudioTrack(), setActiveVideoTrack() and setActiveSubtitleTrack() methods.
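In Qt 6, QMediaPlayer::audioTracks() and subtitleTracks() describe each track as a QMediaMetaData object, from which the language can be read via the QMediaMetaData::Language key. Choosing a track is then plain index selection. The sketch below is plain C++ with a hypothetical helper, and it uses language codes as strings for simplicity. It returns the index of the first track matching the user's preferences, in order of preference, or -1 if nothing matches. You would pass that index to setActiveAudioTrack() or setActiveSubtitleTrack().

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical helper: index of the first track whose language matches the
// most preferred available language, or -1 if no track matches at all.
int pickTrack(const std::vector<std::string> &trackLanguages,
              const std::vector<std::string> &preferredLanguages)
{
    for (const std::string &wanted : preferredLanguages) {
        for (std::size_t i = 0; i < trackLanguages.size(); ++i) {
            if (trackLanguages[i] == wanted)
                return static_cast<int>(i);
        }
    }
    return -1;
}
```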

QMediaPlayer in Qt 6 requires you to explicitly connect it to an audio output and a video output using the setAudioOutput() and setVideoOutput() methods. If no audio output is set, the media player does not play any audio. This is a change from Qt 5, where a default audio output was always selected. We made this change to get a symmetric API for audio and video, and to simplify the integration with QML.

A minimal media player implemented in C++ looks like this:

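#include <QAudioOutput>

#include <QMediaPlayer>

#include <QUrl>

#include <QVideoWidget>

QMediaPlayer player;

QAudioOutput audioOutput; // chooses the default audio routing

player.setAudioOutput(&audioOutput);

QVideoWidget *videoOutput = new QVideoWidget;

player.setVideoOutput(videoOutput);

videoOutput->show();

player.setSource(QUrl::fromLocalFile("mymediafile.mp4"));

player.play();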

The C++/Widgets-based player example has a more complete implementation. It also supports subtitle and audio language selection, and displays the metadata associated with the media file.

The same minimal player written in QML looks like this:

import QtQuick

import QtMultimedia

Window {

width: 640

height: 480

visible: true

MediaPlayer {

id: mediaPlayer

audioOutput: AudioOutput {} // use default audio routing

videoOutput: videoOutput

source: "mymediafile.mp4"

}

VideoOutput {

id: videoOutput

anchors.fill: parent

}

Component.onCompleted: mediaPlayer.play()

}

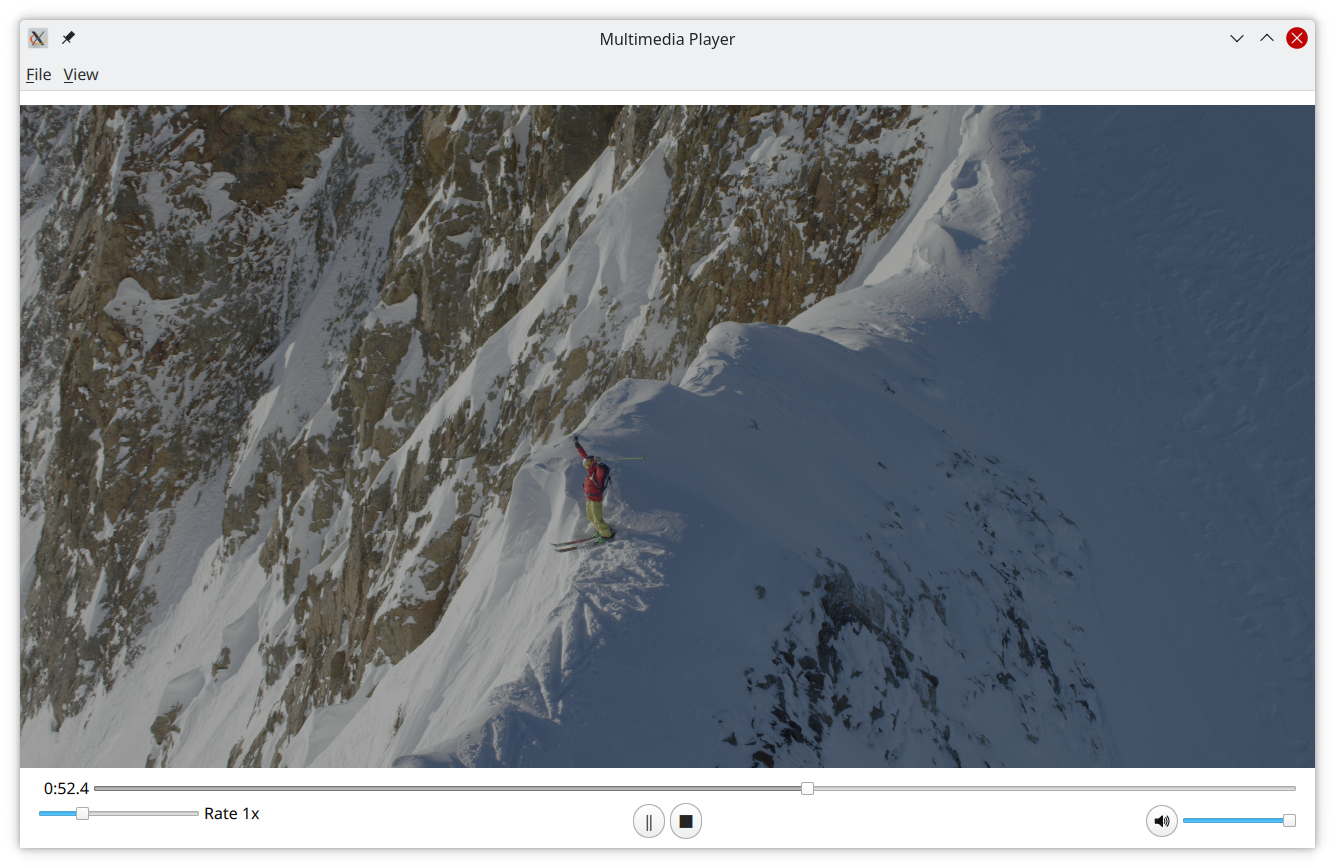

There is also a brand-new QML-based media player example, built with Qt Quick Controls, that you can play around with.

In addition to QMediaPlayer, Qt 6 also provides cross-platform support for decoding audio files into raw PCM data with the QAudioDecoder class. That functionality existed on some platforms in Qt 5, but was not implemented everywhere.
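QAudioDecoder delivers decoded data through its bufferReady() signal and its read() method, which returns a QAudioBuffer. What you do with the PCM data after that is up to you. As one illustrative use, the plain C++ sketch below computes the peak and RMS level of a block of 16-bit signed samples. The `Levels` struct and the `measureLevels` function are hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>
#include <vector>

// Hypothetical result type; both values are normalized to [0, 1].
struct Levels {
    double peak = 0.0; // largest absolute sample value
    double rms = 0.0;  // root mean square of all samples
};

Levels measureLevels(const std::vector<std::int16_t> &samples)
{
    Levels levels;
    if (samples.empty())
        return levels;
    double sumOfSquares = 0.0;
    for (std::int16_t s : samples) {
        const double v = s / 32768.0; // Int16 -> [-1.0, 1.0)
        levels.peak = std::max(levels.peak, std::abs(v));
        sumOfSquares += v * v;
    }
    levels.rms = std::sqrt(sumOfSquares / samples.size());
    return levels;
}
```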

Capturing and recording

The capturing and recording functionality has undergone the largest API changes in Qt 6. Whereas in Qt 5 you had to connect a camera to a recorder somewhat magically, Qt 6 comes with a more explicit API for setting up a capture pipeline.

The central class here is QMediaCaptureSession, which is always required when recording audio or video, or when capturing an image. To set up the session for audio recording, connect an audio input to it using setAudioInput(). To record from a camera, connect the camera using setCamera().

Note that QAudioInput and QCamera act as input channels. The physical device is selected using QAudioInput::setDevice() or QCamera::setCameraDevice(). Once a device is selected, QAudioInput and QCamera let you change its properties, such as the input volume, or the resolution and frame rate of the camera.
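QCameraDevice::videoFormats() lists the formats a camera supports, and QCamera::setCameraFormat() applies one of them. Picking the format closest to a desired resolution and frame rate is ordinary search logic. The plain C++ sketch below models it with a hypothetical `CameraFormat` struct that carries the same information as QCameraFormat: a resolution plus a minimum and maximum frame rate. The "closest pixel count" rule is an assumption chosen for illustration.

```cpp
#include <cstdlib>
#include <optional>
#include <vector>

// Hypothetical stand-in for QCameraFormat.
struct CameraFormat {
    int width = 0;
    int height = 0;
    float minFrameRate = 0;
    float maxFrameRate = 0;
};

// Picks the format that supports `fps` and whose pixel count is closest to
// width x height; returns nullopt if no format can deliver `fps`.
std::optional<CameraFormat> bestFormat(const std::vector<CameraFormat> &formats,
                                       int width, int height, float fps)
{
    std::optional<CameraFormat> best;
    long long bestDistance = 0;
    const long long wanted = 1LL * width * height;
    for (const CameraFormat &f : formats) {
        if (fps < f.minFrameRate || fps > f.maxFrameRate)
            continue; // this format cannot run at the requested frame rate
        const long long distance = std::llabs(1LL * f.width * f.height - wanted);
        if (!best || distance < bestDistance) {
            best = f;
            bestDistance = distance;
        }
    }
    return best;
}
```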

QMediaCaptureSession also lets you connect an audio output and a video output for preview and monitoring purposes. To take a still image, connect a QImageCapture object to the session using setImageCapture():

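#include <QCamera>

#include <QImageCapture>

#include <QMediaCaptureSession>

QMediaCaptureSession session;

QCamera camera; // uses the default camera device

session.setCamera(&camera);

QImageCapture imageCapture;

session.setImageCapture(&imageCapture);

camera.start();

// In real code, wait for readyForCaptureChanged(true) before capturing

imageCapture.captureToFile("myimage.jpg");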

To record audio and video, connect a QMediaRecorder to the session. QMediaRecorder lets you request a specific file format and codecs for the recording by specifying a QMediaFormat. In Qt 6, we now provide a cross-platform API for this, using enums for the different formats and codecs. As codec support is platform dependent, you can also query QMediaFormat for the set of supported file formats and codecs. The backend will always try to resolve the requested format to something that is supported. For example, if you request an MPEG4 file with an H265 video codec but H265 is not supported, the backend might fall back to H264 or another supported codec.

#include <QMediaFormat>

#include <QMediaRecorder>

#include <QUrl>

QMediaRecorder recorder;

session.setRecorder(&recorder);

QMediaFormat format(QMediaFormat::MPEG4);

format.setAudioCodec(QMediaFormat::AudioCodec::AAC);

format.setVideoCodec(QMediaFormat::VideoCodec::H265);

recorder.setMediaFormat(format);

recorder.setOutputLocation(QUrl::fromLocalFile("mycapture.mp4"));

recorder.record();

In addition to the format, you can set other encoder properties on QMediaRecorder, such as the quality, resolution and frame rate, for example with setQuality(), setVideoResolution() and setVideoFrameRate().
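The codec fallback described earlier can be pictured as a small resolution step: keep the requested codec if it can be encoded, otherwise take the first supported codec from a preference list. The plain C++ model below is hypothetical and only illustrates that idea. The actual choice is made inside each platform backend and may differ. In real code, the set of encodable codecs for a given file format comes from calling supportedVideoCodecs(QMediaFormat::Encode) on a QMediaFormat.

```cpp
#include <algorithm>
#include <optional>
#include <vector>

// Hypothetical subset of codecs for the model (not QMediaFormat::VideoCodec).
enum class VideoCodec { H264, H265, VP9, AV1, MotionJPEG };

// Keeps `requested` if supported, otherwise returns the first supported entry
// of `preferences`; nullopt if nothing usable is available.
std::optional<VideoCodec> resolveCodec(VideoCodec requested,
                                       const std::vector<VideoCodec> &supported,
                                       const std::vector<VideoCodec> &preferences)
{
    auto isSupported = [&](VideoCodec c) {
        return std::find(supported.begin(), supported.end(), c) != supported.end();
    };
    if (isSupported(requested))
        return requested;
    for (VideoCodec c : preferences) {
        if (isSupported(c))
            return c;
    }
    return std::nullopt;
}
```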

Video pipeline

The video pipeline has been completely rewritten for Qt 6. The goal was to make it easier to use for custom use cases, and to allow both fully hardware-accelerated decoding and rendering and receiving raw video data in software.

Most of this API is only accessible from C++. On the QML side, there is a VideoOutput QML type. It can, however, easily be connected to things like shader effects, or be used as a sourceItem for a material in Qt Quick 3D.

If you’re using Qt Widgets, the QVideoWidget class serves as an output surface for videos there.

For lower-level access, the central class on the C++ side is QVideoSink, which can receive individual video frames from a media player or a capture session. Each QVideoFrame can then be mapped into memory, in which case you have to be prepared to handle a variety of YUV and RGB formats. Alternatively, a frame can be rendered using QPainter or converted to a QImage.
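When you map a QVideoFrame yourself, you get a pointer and a stride for each plane, via bits(plane) and bytesPerLine(plane), and you must interpret them according to pixelFormat(). To illustrate what that involves, here is a plain C++ sketch that converts an NV12 frame to RGB. NV12 consists of a full-resolution Y plane followed by an interleaved, half-resolution UV plane. The sketch assumes BT.601 limited-range coefficients, and the function and struct names are hypothetical. Real frames may use other formats, color spaces or ranges, so this is not a general converter. For most purposes, QVideoFrame::toImage() is the simpler route.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

struct Rgb { std::uint8_t r, g, b; };

// BT.601 limited-range YUV -> RGB for one pixel (assumed color space).
inline Rgb yuvToRgb(int y, int u, int v)
{
    const double c = 1.164 * (y - 16);
    const double d = u - 128;
    const double e = v - 128;
    auto toByte = [](double x) {
        return static_cast<std::uint8_t>(std::clamp(std::lround(x), 0L, 255L));
    };
    return { toByte(c + 1.596 * e), toByte(c - 0.392 * d - 0.813 * e), toByte(c + 2.017 * d) };
}

// NV12: one Y byte per pixel; one interleaved U,V pair per 2x2 pixel block.
// Strides (bytes per line) may be larger than the width.
std::vector<Rgb> nv12ToRgb(const std::uint8_t *yPlane, int yStride,
                           const std::uint8_t *uvPlane, int uvStride,
                           int width, int height)
{
    std::vector<Rgb> out;
    out.reserve(std::size_t(width) * std::size_t(height));
    for (int row = 0; row < height; ++row) {
        for (int col = 0; col < width; ++col) {
            const int y = yPlane[row * yStride + col];
            const std::uint8_t *uv = uvPlane + (row / 2) * uvStride + (col / 2) * 2;
            out.push_back(yuvToRgb(y, uv[0], uv[1]));
        }
    }
    return out;
}
```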

Future work

After 6.2, there are a couple of items in our backlog that we will be looking into. We have not prioritized them yet, and feedback on your needs would help us here. Our own ideas include, among others:

- Support for multiple video outputs

- Support for multiple cameras

- Support for multiple audio inputs

- Streaming audio/video

- Screen capturing

- Audio mixing

Currently, however, most of our work is focused on fixing bugs and getting everything ready for Qt 6.2. Due to the large changes, there are still many rough edges in the implementation, and some functionality might have bugs or be incomplete. We aim to fix those for 6.2.0, but will need your feedback to do so.

The recently released beta of Qt 6.2 includes binaries for Qt Multimedia that you can easily try out and play around with. We'd appreciate any feedback, either here on the blog or at bugreports.qt.io.