TL;DR

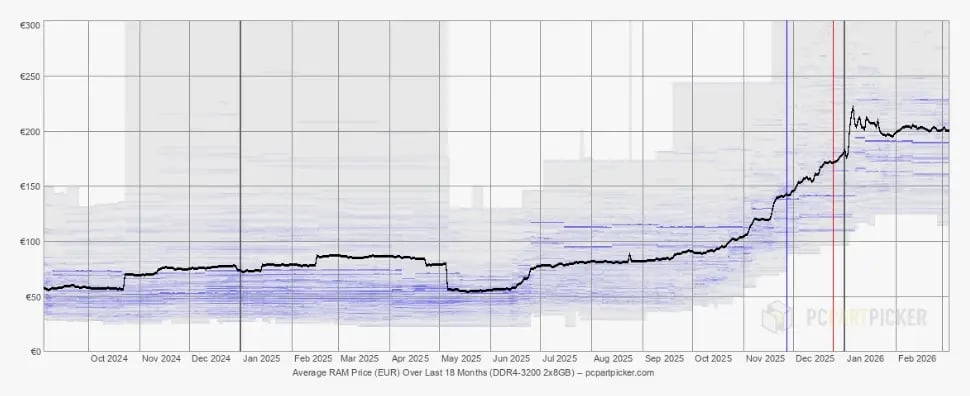

- RAM prices have more than doubled since mid-2025, driven by AI infrastructure demand that has reallocated production away from embedded and industrial markets, with no relief expected before 2027.

- For embedded systems, the consequences are uniquely severe: no swap memory, fixed ceilings, and safety-critical failure modes mean there is no fallback when RAM runs out.

- Software optimization, from asset compression to profiling discipline and framework choice, can reduce RAM footprints by up to 85%, as Volkswagen and Verge Motorcycles have demonstrated after switching to Qt.

Memory prices are keeping R&D managers and CTOs awake at night, and for good reason. Between Q4 2025 and Q1 2026, conventional DRAM contract prices surged by more than 100%. For companies shipping connected devices at scale, that isn't an engineering footnote. It's a direct hit to the bottom line.

If you're building embedded products (industrial controllers, automotive HMIs, medical devices, or consumer electronics), RAM in embedded systems has become one of your most volatile cost drivers. And unlike a software bug, you can't patch your way out of a BOM problem after the fact.

Qt recently hosted a webinar on the memory crunch, highlighting the main reasons companies are facing these challenges now and how to position your product portfolio for long-term success.

Why The Memory Shortage Is Different This Time

When the price of a commodity such as embedded RAM spikes, it is usually a cyclical phenomenon: within weeks, or months at worst, supply catches up with demand, prices correct, and the industry moves on.

This time, though, the dynamics are structural.

The world's three dominant memory manufacturers (Samsung Electronics, SK Hynix, and Micron Technology) have been redirecting production capacity toward High Bandwidth Memory (HBM) for AI accelerators and advanced DDR5 for data centers. These products command significantly higher margins.

The result is a zero-sum reallocation: every wafer that goes to an HBM stack for an AI server is a wafer that isn't producing the LPDDR or DDR4 that powers your embedded device. IDC describes this as potentially a permanent, strategic reallocation of global silicon wafer capacity, not a temporary disruption.

According to TrendForce, LPDDR4X and LPDDR5X contract prices (the memory types most commonly used in embedded Linux platforms) are projected to rise approximately 90% quarter-over-quarter in Q1 2026 alone, on top of the 48–53% surge already seen in Q4 2025. That compounds to nearly a tripling of prices in six months. Multiple industry analysts expect this supply constraint to persist well into 2027.
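The compounding behind that "nearly a tripling" figure can be checked with a few lines of arithmetic. The sketch below is illustrative only: it uses the TrendForce percentages quoted above, and the function name is our own.

```python
def compound_increase(*quarterly_rises_pct: float) -> float:
    """Return the overall price multiplier after applying each quarterly rise in turn."""
    multiplier = 1.0
    for rise in quarterly_rises_pct:
        multiplier *= 1.0 + rise / 100.0
    return multiplier


# Q4 2025 rise of 48-53%, followed by the projected ~90% rise in Q1 2026
low = compound_increase(48, 90)
high = compound_increase(53, 90)
print(f"Price multiplier after two quarters: {low:.2f}x to {high:.2f}x")
```

Both ends of the range land a little under 3x, which is where the "nearly a tripling" wording comes from.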

An example of how RAM prices have spiked recently: DDR4-3200 2x8GB has more than doubled in price over the past six months. For more charts and prices, see pcpartpicker.com.

Embedded Systems Get Hit The Hardest

The memory price spike is painful across the board, but it is disproportionately severe for embedded systems, mainly for two reasons.

First, the financial exposure. On a desktop or high-end consumer device, a cost increase is absorbed within a broad Bill of Materials (BOM). On an embedded device designed to hit a tight price point, even a $1–2 increase per unit can wipe out margins entirely. For a manufacturer shipping a million units, that is $1–2 million in lost profit per product line, per year. Furthermore, embedded platforms cannot transition to new memory standards as quickly as consumer markets, and DDR4 (now being phased out by manufacturers) remains essential for countless deployed designs.

Second, the lack of fallback options. Desktop and mobile operating systems can use virtual memory, swap space, and dynamic resource allocation across applications. Embedded systems typically run a single application with a fixed memory ceiling. When you hit that ceiling, there is no safety net. In safety-critical industries like automotive or medical, that isn't just an inconvenience: it can trigger costly recalls or compromise user safety.

There is also a supply availability risk beyond price. As flash memory in embedded systems becomes harder to source, manufacturers may find they cannot obtain the specific qualified component they need, forcing hardware redesigns or specification downgrades mid-cycle.

How Did We Get Here?

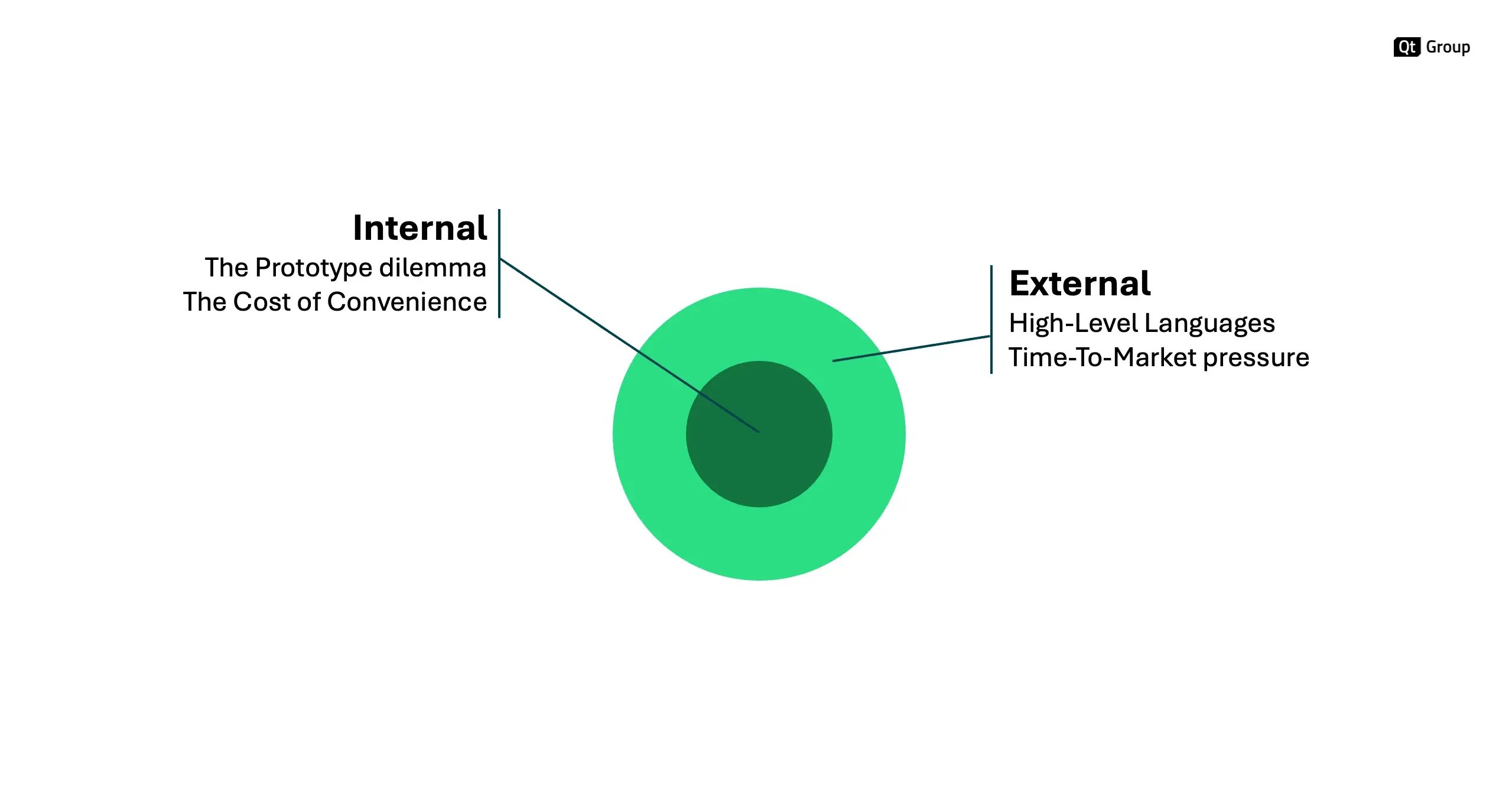

Market forces and the rise of AI technology are only part of the story. In many embedded projects, memory problems are also self-inflicted, the result of two common internal engineering patterns and two external pressures that are easy to underestimate.

The Internal Factors

On the internal side, the first is the prototype dilemma. When a team builds a proof of concept (POC) to validate an idea, they optimize for speed of development rather than efficiency of resource use. The problem is that market pressure rarely allows time to rebuild cleanly once the POC is validated. The prototype ships, carrying all the memory waste of what was essentially a development experiment.

The second is the cost of convenience. Modern package managers and component libraries make it trivially easy to pull in dependencies. Every added library expands the memory footprint, often invisibly, until you're well into development and discover you're running tight on hardware that's already been locked down.

Two internal and two external factors influence the importance of RAM in embedded systems development. These are key considerations if you're trying to reduce how rising RAM prices affect your bottom line.

The External Factors

On the external side, technology stack choices made early in a project have long-term consequences that are easy to ignore at the time. Programming languages chosen for their accessibility and ease of onboarding can carry a significant runtime overhead. JavaScript is a common example: it lowers the barrier to entry, but its runtime weight means that performance-sensitive applications often end up relying on C++ or Rust libraries underneath to compensate. Choosing your language and framework is not just a developer productivity decision; it directly shapes your memory budget for the life of the product.

Finally, there is the pressure to ship. Release timelines create a constant tension between getting to market and getting the product right. Teams that don't build memory profiling and performance validation into their regular development cycle often discover problems too late to address them without costly rework. Knowing when a product is genuinely ready, across all criteria, including memory efficiency, requires deliberate discipline that time-to-market pressure tends to erode.

How To Save Memory Space In Embedded Systems

Switching to a lower-spec memory component sounds simple in theory, but in practice, it introduces hardware qualification risks, potential performance penalties, and further supply chain uncertainty. Software optimization, on the other hand, is a lever you can pull without touching your hardware design.

The impact can be substantial, and it starts with understanding where your RAM in embedded systems is actually going.

UI Assets Are Often The Biggest Culprit

Images and graphical resources can account for up to 80% of RAM usage during runtime. The choice between rasterized images (PNG) and vector graphics (SVG) involves a genuine trade-off: PNGs consume more RAM but less CPU at runtime; SVGs render via CPU at display time, keeping RAM usage lower. Neither is universally correct, as the right choice depends on your hardware profile.

What many teams don't realize is that compressed texture formats like ASTC can reduce RAM consumption by 75–85% compared to standard PNG, with near-zero CPU overhead, because the GPU decodes them directly. If saving memory space and power in embedded systems is a priority, evaluating compressed texture formats is one of the highest-leverage steps available.

| | Standard (PNG/RGBA) | Compressed (ASTC/ETC2) | Impact on the "Crunch" |
|---|---|---|---|
| Flash Size (KB) | 100 | ~150 | Minor impact on NAND |
| RAM Usage (KB) | 8,000 | ~1,000 to 2,000 | 75% to 85% RAM reduction |
| CPU Load | Low | Zero | Faster startup time |
| Visual Quality | Perfect | Near-Perfect | Excellent for 99% of UIs |

Compressing images with ASTC can cut RAM usage by up to 85% compared to standard PNG, with minimal effect on flash size and almost no CPU overhead.
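To see where a 75–85% range can come from, it helps to compare in-memory sizes directly. The sketch below assumes a decoded PNG is held as 8-bit RGBA (4 bytes per pixel) and relies on the fact that ASTC stores every block in 128 bits regardless of its pixel footprint. The 1024x1024 texture and the helper names are hypothetical, and mipmaps, alignment, and driver overhead are ignored.

```python
import math

ASTC_BLOCK_BYTES = 16  # every ASTC block is 128 bits, whatever its footprint


def rgba8_bytes(width: int, height: int) -> int:
    """Uncompressed 8-bit RGBA: 4 bytes per pixel once decoded into RAM."""
    return width * height * 4


def astc_bytes(width: int, height: int, block_w: int, block_h: int) -> int:
    """ASTC keeps one 16-byte block per block_w x block_h tile of pixels."""
    blocks_x = math.ceil(width / block_w)
    blocks_y = math.ceil(height / block_h)
    return blocks_x * blocks_y * ASTC_BLOCK_BYTES


def reduction_pct(width: int, height: int, block_w: int, block_h: int) -> float:
    """Percentage of RAM saved by ASTC relative to uncompressed RGBA8."""
    raw = rgba8_bytes(width, height)
    compressed = astc_bytes(width, height, block_w, block_h)
    return 100.0 * (1.0 - compressed / raw)


# Hypothetical 1024x1024 UI texture at three common block footprints
for block_w, block_h in ((4, 4), (5, 5), (6, 6)):
    saved = reduction_pct(1024, 1024, block_w, block_h)
    print(f"ASTC {block_w}x{block_h}: {saved:.1f}% less RAM than RGBA8")
```

Larger block footprints save more memory at the cost of image quality, so which footprint is acceptable depends on the artwork and should be judged on the target display.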

Profiling Should Start On Day One

By the time a team runs memory profiling six months into development, the architectural decisions that drive memory usage are already set in stone. As IAR's engineering team puts it, the real value of RAM optimization is not measured in bytes: it's measured in options.

Establishing memory budgets, profiling tools, and automated regression testing from the start of the project is what separates teams that ship on the target hardware from those that scramble for a more expensive chip at the last minute.
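As one possible shape for such a regression check, the sketch below reads the resident set size from the text of a Linux `/proc/<pid>/status` file and fails when it exceeds a budget. The budget value, the sample text, and the function and exception names are all hypothetical; a real pipeline would capture the status text from the running application on the target device.

```python
import re


class MemoryBudgetExceeded(Exception):
    """Raised when measured memory use is above the agreed budget."""


def parse_vmrss_kb(status_text: str) -> int:
    """Extract the VmRSS value (in kB) from the text of /proc/<pid>/status."""
    match = re.search(r"^VmRSS:\s+(\d+)\s+kB", status_text, re.MULTILINE)
    if match is None:
        raise ValueError("VmRSS line not found in status text")
    return int(match.group(1))


def check_budget(measured_kb: int, budget_kb: int) -> None:
    """Raise so that a CI job fails as soon as the budget is exceeded."""
    if measured_kb > budget_kb:
        raise MemoryBudgetExceeded(
            f"RSS {measured_kb} kB exceeds budget of {budget_kb} kB"
        )


# Hypothetical sample captured from a running HMI process
SAMPLE_STATUS = "Name:\thmi-app\nVmPeak:\t  412000 kB\nVmRSS:\t  183456 kB\n"
HMI_BUDGET_KB = 200 * 1024  # hypothetical 200 MB budget for the application

rss_kb = parse_vmrss_kb(SAMPLE_STATUS)
check_budget(rss_kb, HMI_BUDGET_KB)
print(f"RSS {rss_kb} kB is within the {HMI_BUDGET_KB} kB budget")
```

Running a check like this on every build turns a memory regression into a failed test on the day it is introduced, rather than a surprise late in the project.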

Lazy Loading And Architectural Discipline Matter

Not everything needs to be in RAM at the same time. Reviewing what gets loaded, when, and whether it actually needs to be loaded at all is a straightforward audit that often yields meaningful savings.
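A minimal sketch of that idea, assuming assets can be reloaded on demand: load each asset the first time it is requested, and evict the least recently used ones once a byte budget is exceeded. The class, the loader, and the asset sizes below are hypothetical and not tied to any particular framework.

```python
from collections import OrderedDict
from typing import Callable


class LazyAssetCache:
    """Load assets on first use and evict the least recently used when over budget."""

    def __init__(self, loader: Callable[[str], bytes], budget_bytes: int) -> None:
        self._loader = loader
        self._budget = budget_bytes
        self._assets = OrderedDict()  # asset name -> bytes, oldest first
        self._used = 0

    @property
    def used_bytes(self) -> int:
        return self._used

    def get(self, name: str) -> bytes:
        if name in self._assets:
            self._assets.move_to_end(name)  # mark as most recently used
            return self._assets[name]
        data = self._loader(name)  # nothing is loaded until first requested
        self._assets[name] = data
        self._used += len(data)
        # Evict oldest entries until within budget, keeping the asset just loaded
        while self._used > self._budget and len(self._assets) > 1:
            _, evicted = self._assets.popitem(last=False)
            self._used -= len(evicted)
        return data


# Hypothetical screens and their decoded asset sizes in bytes
SIZES = {"home": 600, "settings": 300, "map": 500}
loads = []


def fake_loader(name: str) -> bytes:
    loads.append(name)
    return bytes(SIZES[name])  # zero-filled placeholder of the right size


cache = LazyAssetCache(fake_loader, budget_bytes=1000)
for screen in ("home", "settings", "map"):
    cache.get(screen)
print(f"Resident after visiting three screens: {cache.used_bytes} bytes")
```

The trade-off is a reload cost when an evicted asset is needed again, so the budget should be sized against how users actually move between screens.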

Your Framework Choice Has Long-term Memory Implications

The runtime environment of your chosen language and framework contributes to your memory footprint in ways that are easy to underestimate early in a project. Choosing a framework that gives you fine-grained control over what gets included (and allows you to strip out libraries you don't need) matters more in a world of rising RAM prices.

What The Memory Crunch Means In Real Money

Abstract percentages don't tell the full story. Let's make it concrete, starting with one example from a Qt customer.

Verge Motorcycles, the Finnish electric motorcycle manufacturer, approached Qt with a critical memory issue in its StarOS HMI platform. As Tero Ohranen, their UX/UI Designer, described it, memory usage was creeping up over time, eventually maxing out the device and causing crashes.

At one point, their previous solution was consuming more than 4GB of RAM. After migrating to Qt, that figure dropped below 0.5GB, representing an 85% reduction delivered in six weeks without compromising the premium riding experience the brand is known for.

Verge Motorcycles switched to Qt and reduced memory consumption by 85% for the two-screen human-machine interface (HMI) on the TS Pro. Full story here.

Now consider what that 3.5GB reduction is worth today, compared to just six months ago.

Looking at LPDDR memory (a reasonable assumption for an embedded Linux system of this complexity), LPDDR4X was broadly available at contract prices of around $3–4 per GB in mid-2025. By Q1 2026, LPDDR contract prices had nearly tripled from that baseline, putting the same memory in the $9–12 per GB range.

At mid-2025 prices, 3.5GB of saved RAM translated to roughly $10–14 per unit. At today's prices, that same saving is worth $30–42 per unit. For a manufacturer shipping 50,000 units annually, the difference between an optimized and an unoptimized memory footprint has gone from around $500,000–700,000 in annual BOM impact to $1.5–2.1 million.

The software optimization that was already a good idea in 2025 is now, financially, a different conversation entirely.

Note that these figures are illustrative estimates based on publicly reported LPDDR contract price trends. Actual per-unit costs vary depending on volume tier, contract structure, component generation, and sourcing conditions. Verify specific numbers with your procurement contacts or component partners before including them in your BOM projections.
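The arithmetic behind those estimates is simple enough to rerun with your own inputs. The sketch below uses the illustrative figures from this section (3.5GB saved, $3–4 and $9–12 per GB, 50,000 units a year); the function name is our own, and the unrounded results come out slightly above the rounded low-end numbers quoted in the text.

```python
def bom_saving(saved_gb: float, price_per_gb: float, units_per_year: int):
    """Return (saving per unit, saving per year) in dollars for one price point."""
    per_unit = saved_gb * price_per_gb
    return per_unit, per_unit * units_per_year


SAVED_GB = 3.5
UNITS_PER_YEAR = 50_000
SCENARIOS = {
    "mid-2025": (3.0, 4.0),  # illustrative $ per GB range
    "Q1 2026": (9.0, 12.0),  # illustrative $ per GB range
}

for label, (low_price, high_price) in SCENARIOS.items():
    unit_low, year_low = bom_saving(SAVED_GB, low_price, UNITS_PER_YEAR)
    unit_high, year_high = bom_saving(SAVED_GB, high_price, UNITS_PER_YEAR)
    print(
        f"{label}: ${unit_low:.2f}-{unit_high:.2f} per unit, "
        f"${year_low:,.0f}-{year_high:,.0f} per year"
    )
```

Swapping in your own contract price, volume, and memory saving gives a first-order view of what a given optimization is worth.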

Nonetheless, this is the compounding logic of the memory crunch: the worse the shortage gets, the more valuable every megabyte of software optimization becomes.

Another Case In Point: Volkswagen

The Verge story is not an isolated example. Volkswagen's California campervan project faced a similar situation. Tier-1 supplier Desay SV had built a control panel for the vehicle's 5-inch rear touchscreen, but the chosen HMI framework showed limitations: laggy scrolling, missing animation smoothness, and a memory footprint that didn't meet Volkswagen's requirements.

Desay SV migrated to Qt for MCUs on a Renesas RH850 microcontroller. The new solution was completed in a matter of weeks, reducing estimated memory consumption by 50% while simultaneously improving the user experience.

Volkswagen and Desay SV have built the control panel for the California campervan's 5-inch rear touchscreen with Qt. They reduced memory consumption by 50%. Full story here.

Building Resilience For The Long Term

The memory market will eventually stabilize, but even then, analysts expect the baseline price level to remain significantly higher than pre-2024 norms. The companies that emerge with healthy margins will be those that have built software efficiency into their product development culture, not as a crisis response, but as a standard engineering discipline.

That means three things at a strategic level.

First, flexibility over lock-in: your software stack should allow you to switch hardware vendors and memory types without a full redevelopment cycle, reducing your exposure to any single supplier's pricing.

Second, performance as a product strategy: efficient software means you can run the same experience on lower-spec, lower-cost hardware, a genuine competitive advantage when BOM costs are volatile.

Third, memory as a strategic resource from day one: the decisions made in the first weeks of a project (framework selection, asset strategy, profiling practices) determine your memory budget for the entire product lifetime.

Qt is built around exactly this principle: a framework designed to maximize performance across any platform, while giving teams the cross-platform flexibility to switch target hardware without starting from scratch.